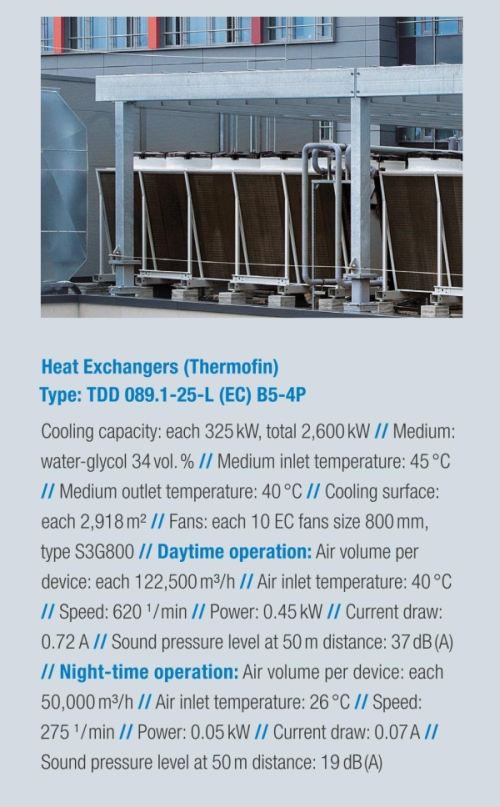

22 degrees Celsius and 55 percent humidity, preferably without any deviation, around the clock, 365 days a year: the supercomputers in the brand-new computer centre of the German Weather Service have clear ideas about their own “comfortable climate”. If it gets only a few degrees too warm for the computing components, they stop working. To prevent this, Michael Jonas, head of the German Meteorological Computer Centre (DMRZ), relies on a cleverly devised climate-control concept. The visible icing on the cake of this cooling system sits enthroned at the very top, on the roof of the newly built weather headquarters: eight mighty heat exchangers with a total capacity of 2,600 kilowatts absorb the concentrated thermal load from the computer centre. In them, 80 large EC axial fans move nearly one million cubic metres of air per hour so that the weather computers stay cool and keep computing. Neighbours in the surrounding residential area can nevertheless sleep soundly: thanks to the infinitely variable, whisper-quiet EC motors, the sound pressure level at a distance of 50 metres is only 19 dB(A), far below the legal limit for residential areas.
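To get a feel for the rooftop figures, the air-side heat balance can be sketched in a few lines. This is only a rough estimate: it assumes the heat exchangers run at their full 2,600 kilowatts, that the fans move exactly one million cubic metres per hour, and that the air has textbook properties (density 1.2 kg/m³, specific heat 1,005 J/(kg·K)). None of these operating conditions is stated in the article, and the function name is purely illustrative.

```python
# Rough air-side heat balance: Q = m_dot * c_p * delta_T
# Assumed air properties (not from the article): typical values near 20 degrees C.
AIR_DENSITY_KG_PER_M3 = 1.2
AIR_SPECIFIC_HEAT_J_PER_KG_K = 1005.0


def air_temperature_rise(heat_kw, airflow_m3_per_h):
    """Return the temperature rise (in kelvin) of the air carrying away heat_kw."""
    mass_flow_kg_per_s = AIR_DENSITY_KG_PER_M3 * airflow_m3_per_h / 3600.0
    return heat_kw * 1000.0 / (mass_flow_kg_per_s * AIR_SPECIFIC_HEAT_J_PER_KG_K)


# Figures quoted for the rooftop heat exchangers and their 80 fans.
rise_k = air_temperature_rise(heat_kw=2600, airflow_m3_per_h=1_000_000)
print(f"Air warms by roughly {rise_k:.1f} K on its way through the heat exchangers")
```

Under these assumptions the air leaves the heat exchangers a little under 8 kelvin warmer than it entered, which is a plausible order of magnitude for equipment of this kind.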

And now for the weather

For a good weather forecast the modern meteorologist needs a lot of experience and a great deal of measured data, and one thing above all: computing power! Numerical weather models cover the globe with a finely meshed mathematical grid. The finer this digital fishnet stocking, the more realistic the results, and the greater the hunger for computing power. It is a hunger, however, that Michael Jonas cannot satisfy without limits. As he is responsible not only for the technology but also for the budget, he must keep an eye on the cost-effectiveness of the computer centre. This also applies to the cooling of the two computer rooms. In fact, the computers would prefer it a few degrees cooler; the value of 22 degrees is a compromise between power consumption on the one hand and the availability and reliability of the systems on the other. On an area of over 1,000 square metres, computer stands next to computer, rack next to rack, cabinet next to cabinet, and the installation is continuously expanding. As a comparison: in 2003 the DMRZ reached a computing capacity of 3,000 gigaflops, or around 3,000 billion computing operations per second, roughly the power of 20,000 PCs. In the final construction stage in 2012, Michael Jonas will make 50 teraflops available to users. This is around 50,000 billion computing operations per second, the performance of around 400,000 PCs. Anyone who wants to compute this much also needs a lot of electricity: even today the systems, including the required cooling, draw a good 600 kilowatts, a value that will rise to roughly 2,000 kilowatts by 2012.
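The comparison figures can be checked with a little arithmetic. The sketch below only rearranges the numbers quoted above; the variable names are illustrative, and the power growth factor rests on the assumption that “today's” 600 kilowatts can be set directly against the 2012 target.

```python
# Back-of-the-envelope check of the quoted computing and power figures.
GIGA = 1e9
TERA = 1e12

flops_2003 = 3_000 * GIGA   # 3,000 gigaflops
flops_2012 = 50 * TERA      # 50 teraflops

# Implied performance of one "PC" in each comparison, in megaflops.
pc_mflops_2003 = flops_2003 / 20_000 / 1e6
pc_mflops_2012 = flops_2012 / 400_000 / 1e6

# Growth in computing capacity versus growth in electrical power.
compute_growth = flops_2012 / flops_2003
power_growth = 2_000 / 600  # kilowatts, 2012 target vs. "today" (assumed comparable)

# Electrical power per teraflop at the final construction stage.
kw_per_teraflop_2012 = 2_000 / (flops_2012 / TERA)

print(f"Implied PC performance: {pc_mflops_2003:.0f} and {pc_mflops_2012:.0f} megaflops")
print(f"Computing capacity grows about {compute_growth:.1f}-fold")
print(f"Power draw grows about {power_growth:.1f}-fold")
print(f"2012: about {kw_per_teraflop_2012:.0f} kW per teraflop")
```

The two PC comparisons imply slightly different per-PC performance, so they are best read as rough illustrations. The more telling result is that computing capacity is planned to grow far faster than the power draw.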

Current in, heat out

What goes in as current must come out again as heat, according to a rule of thumb. Several factors must therefore be taken into account when planning the air conditioning for the computer centre: in addition to room size, power draw, redundancy and thermodynamics, energy efficiency plays a growing role. The DMRZ relies on a pumped cold-water circuit (with a 5,000-litre reservoir in the cellar) as well as forced cooling with Stulz CyberAir precision air-conditioning units. In the cold months, outside air is fed in for cooling. As the outside temperature falls, the load on the compressors in the air-conditioning units falls too. Intelligent standby management reduces this load even further: it spreads the reserve capacity evenly across all units, which then run at partial load and thus very economically. Further savings come from the fans in the CyberAir units. Just like their big “colleagues” on the roof, they are driven by electronically controlled EC direct-current motors and deliver precisely the air flow that is needed, no less and no more. They adapt steplessly to every power requirement and run very efficiently at partial load.
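Why sharing the load across all units saves energy can be illustrated with the idealised fan affinity law, under which fan power scales with the cube of the air flow. The sketch below is an assumption-laden illustration, not a description of the DMRZ or Stulz control logic: the unit counts are hypothetical, real fans deviate from the cube law, and the relationship applies to the fans only, not to the compressors.

```python
# Idealised fan affinity law: power is proportional to (air flow) cubed.
# Power and flow are expressed as fractions of one unit's rated values.

def fan_power_fraction(flow_fraction):
    """Fraction of rated fan power needed to deliver flow_fraction of rated flow."""
    return flow_fraction ** 3


def total_fan_power(units_running, required_flow_units):
    """Total fan power (in units of one fan's rated power) when the required
    air flow is shared equally among units_running identical units."""
    flow_fraction = required_flow_units / units_running
    return units_running * fan_power_fraction(flow_fraction)


# Hypothetical example: the room needs the air flow of three units at full speed.
standby_idle = total_fan_power(units_running=3, required_flow_units=3.0)
load_shared = total_fan_power(units_running=4, required_flow_units=3.0)

print(f"Three units at full speed, one idle: {standby_idle:.2f} x rated power")
print(f"Four units sharing the load:         {load_shared:.2f} x rated power")
```

In this idealised case, bringing the standby unit into operation and running all four at 75 percent of their rated air flow cuts the total fan power by a little over 40 percent.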

Controlled air traffic

The cold air generated by the air-conditioning units is directed down into the raised floor and from there to the racks and computer cabinets. The “cold-aisle/hot-aisle containment” principle provides an optimum cooling circuit. In a cold aisle, the cool air from the air-conditioners passes up through the perforated floor plates and is drawn in by the computer fans. The heated air then flows into the hot aisle on the opposite side, rises to the ceiling and flows back to the air-conditioner. A water-glycol mixture absorbs the excess heat and transfers it to the heat exchangers on the building roof. In the cool months the heat exchangers do not have much to do, because the amount of waste heat is nearly zero. The reason: a heat pump extracts the heat from the coolant, and it is then used to heat the German Weather Service office areas, which total over 22,000 square metres. The computers therefore not only supply the meteorologists and scientists with the computing power they long for, they also provide a cosy indoor climate.
